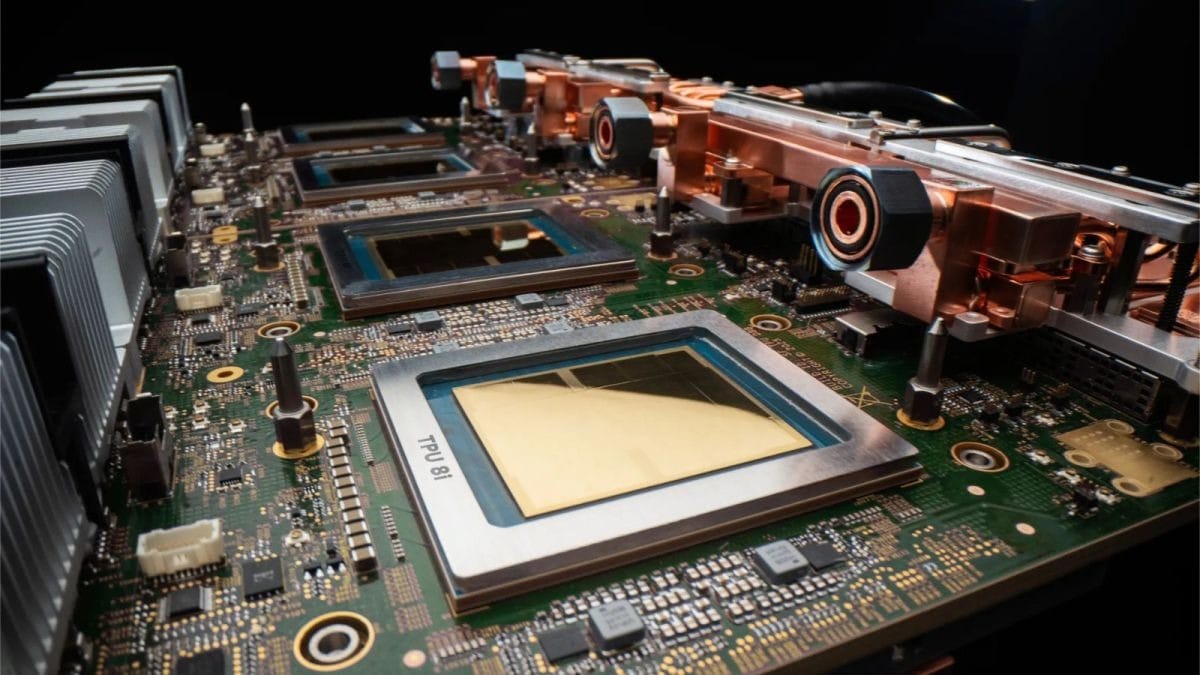

Google unveiled its eighth-generation TPU lineup at Google Cloud Next 2026 on April 22, positioning itself directly against Nvidia's dominance in AI infrastructure. The TPU 8t handles massive training workloads whilst the TPU 8i targets inference with 80% better performance per dollar than the previous Ironwood generation. For UAE enterprises planning AI deployments, this represents the first credible alternative to Nvidia's premium-priced chips.

Key Takeaways

- Google unveiled TPU 8t for training and TPU 8i for inference on April 22, 2026.

- TPU 8i delivers 80% better inference performance per dollar than the previous Ironwood generation.

- TPU 8t superpods scale to 9,600 chips delivering 121 exaflops of compute.

- Anthropic signed a multi-billion-dollar deal for up to 1 million TPUs.

- New Axion VMs claim 30% better price-performance than rival cloud providers.

What makes TPU 8t different from previous generations?

The TPU 8t specialises in massive training workloads with 9,600 chips per superpod delivering 121 exaflops of compute power. According to Google, the architecture scales near-linearly to one million chips via the Virgo Network, with 10x faster storage access than Ironwood. This matters because most AI training hits storage bottlenecks before compute limits — a problem Google claims to have solved.
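To put the superpod figures in per-chip terms, the arithmetic can be sketched as below. This is a rough illustration only: it uses the 9,600-chip and 121-exaflop figures quoted above, assumes the total is a peak number spread evenly across chips, and the function names are made up for this example. The article does not state the numeric precision behind the 121-exaflop figure, so the result is not directly comparable with per-chip specs quoted at a specific precision.

```python
# Figures quoted in the article for one TPU 8t superpod.
CHIPS_PER_SUPERPOD = 9_600
SUPERPOD_EXAFLOPS = 121

PETAFLOPS_PER_EXAFLOP = 1_000


def per_chip_petaflops(superpod_exaflops: float, chips: int) -> float:
    """Average peak compute per chip, in petaflops (hypothetical helper)."""
    return superpod_exaflops * PETAFLOPS_PER_EXAFLOP / chips


def superpods_needed(target_chips: int, chips_per_superpod: int) -> int:
    """Whole superpods required to reach a target chip count, rounded up."""
    return -(-target_chips // chips_per_superpod)  # ceiling division


print(f"{per_chip_petaflops(SUPERPOD_EXAFLOPS, CHIPS_PER_SUPERPOD):.1f} petaflops per chip")
print(superpods_needed(1_000_000, CHIPS_PER_SUPERPOD), "superpods for one million chips")
```

On these numbers, each chip averages roughly 12.6 petaflops, and a million-chip cluster would span 105 superpods.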

The real breakthrough isn't raw power but efficiency. Google reports 2.7x better training price-performance and up to 2x better performance-per-watt versus the previous generation. For UAE data centres dealing with high cooling costs, that efficiency gain translates directly to operational savings.

Training at this scale has been Nvidia's moat. If Google's superpods do scale to million-chip clusters without performance degradation, cluster-scale architecture could offset the per-chip advantages of Nvidia's Rubin.

How TPU 8i tackles inference bottlenecks

The TPU 8i addresses inference with 288GB of HBM plus 384MB of SRAM, three times the memory of previous generations. This targets what Google calls the 'memory wall', which throttles low-latency inference for large mixture-of-experts models.

The 80% price-performance improvement over Ironwood specifically targets real-time applications: chatbots, fraud detection, recommendation engines. For UAE fintech and e-commerce companies running customer-facing AI, this could democratise capabilities previously exclusive to tech giants.
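A "better performance per dollar" figure is not the same as an equal-sized cut in cost, so it helps to convert one into the other. The sketch below assumes the claimed gain applies uniformly to a fixed workload; `cost_reduction` is a hypothetical helper, and the multipliers are the vendor figures quoted in this article rather than independent measurements.

```python
def cost_reduction(perf_per_dollar_multiplier: float) -> float:
    """Fractional drop in cost for the same work, given a perf-per-dollar multiplier.

    If each dollar buys m times as much work, the same work costs 1/m as much.
    """
    if perf_per_dollar_multiplier <= 0:
        raise ValueError("multiplier must be positive")
    return 1 - 1 / perf_per_dollar_multiplier


# "80% better performance per dollar" corresponds to a 1.8x multiplier.
print(f"TPU 8i inference: {cost_reduction(1.8):.1%} lower cost for the same work")

# The 2.7x training price-performance figure quoted for TPU 8t.
print(f"TPU 8t training:  {cost_reduction(2.7):.1%} lower cost for the same work")
```

Under that assumption, an 80% price-performance gain works out to about 44% lower cost for the same inference volume, not 80%.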

What Google doesn't mention is deployment complexity. Inference optimisation often means adapting models to the TPU software stack, which is not the plug-and-play migration that Nvidia's CUDA ecosystem offers. UAE enterprises need to weigh integration costs against the performance savings.
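One simple way to frame that trade-off is a break-even calculation: how many months of savings it takes to recoup a one-off migration cost. The sketch below is illustrative only. Every input is a hypothetical placeholder (no pricing has been published), and it assumes a flat monthly spend and a constant saving rate.

```python
import math


def breakeven_months(integration_cost: float,
                     monthly_spend: float,
                     saving_fraction: float) -> float:
    """Months until cumulative savings cover a one-off integration cost.

    saving_fraction is the share of monthly spend saved after migrating
    (for example 0.44 for a 44% reduction). Returns infinity if nothing is saved.
    """
    monthly_saving = monthly_spend * saving_fraction
    if monthly_saving <= 0:
        return math.inf
    return integration_cost / monthly_saving


# Hypothetical inputs, in arbitrary currency units: not real pricing.
months = breakeven_months(
    integration_cost=500_000,    # assumed one-off engineering cost
    monthly_spend=100_000,       # assumed current monthly inference bill
    saving_fraction=1 - 1 / 1.8  # cost cut implied by an 80% perf-per-dollar gain
)
print(f"Break-even after about {months:.2f} months")
```

If the realised saving is smaller than the headline claim, the break-even point moves out proportionally, so it is worth running the calculation with conservative inputs.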

Axion VMs bring Arm to enterprise cloud

Google's new Axion-based 4A virtual machines represent the company's Arm CPU push, claiming 30% better price-performance for agent workloads compared to rival hyperscalers. The VMs double CPU host density per server with NUMA isolation, reducing infrastructure footprint.

This matters for the UAE's edge computing initiatives, particularly Dubai's smart city projects where space and power constraints favour efficient architectures. Arm's inherent power efficiency aligns with the region's sustainability goals whilst reducing operational costs.

The Axion launch echoes Amazon's Graviton success but arrives years later. Google's advantage lies in integrated optimisation with TPU workloads — something AWS and Microsoft can't match with their mixed Intel/AMD/Arm portfolios. For enterprises already committed to Google's UAE partnerships, this creates compelling stack consolidation opportunities.

What this means for UAE AI strategy

The TPU 8 launch directly supports the UAE's AI Strategy 2031 by providing sovereign AI infrastructure options within the Dubai Cloud region. ADNOC, Emirates NBD, and other UAE enterprises can now train and deploy AI models locally without relying on Nvidia hardware amid ongoing chip export restrictions.

Google's diversified supply chain — spanning Broadcom, MediaTek, Marvell, and Intel — reduces single-point-of-failure risks that plague Nvidia's Taiwan-centric manufacturing. For strategic UAE sectors like energy and finance, this supply chain resilience matters as much as performance.

The timing aligns with broader digital transformation trends accelerating across the region. UAE companies planning AI deployments now have credible alternatives to Nvidia's premium pricing, potentially reducing total cost of ownership by 40-50% for large-scale deployments.

How TPU 8 compares to Nvidia and AWS

Against Nvidia Rubin, TPU 8 trades per-chip performance for cluster-scale advantages. Rubin delivers 35 petaFLOPS FP4 per chip with 288GB HBM4 at 22TB/s — impressive specs that matter less when training requires thousands of interconnected chips. Google's Virgo Network maintains performance scaling that Nvidia's InfiniBand struggles to match at million-chip scales.

AWS Trainium takes a similar inference-focused approach to TPU 8i but lacks Google's training capabilities. Amazon's fabric shift acknowledges the same inference bottlenecks Google targets, but without the integrated training-to-inference workflows that Google's unified architecture enables.

The real competition isn't technical specifications but ecosystem lock-in. Nvidia's CUDA dominance means most AI engineers know its tools. Google counters with JAX, PyTorch integration, and simplified XLA compilation — but the learning curve remains steeper than Nvidia's established workflows.

TPU 8 availability and enterprise access

Google hasn't announced specific pricing for TPU 8t and 8i chips, following its typical approach of custom enterprise negotiations rather than public rate cards. General availability is expected later in 2026, with early access programmes beginning in Q3 2026.

UAE enterprises can access TPUs through Google Cloud's Dubai region, which provides low-latency connectivity and data residency compliance for regulated industries. Anthropic's multi-billion-dollar commitment for up to one million TPUs signals confidence in production readiness, whilst Meta's rental agreement suggests competitive pricing versus Nvidia alternatives.

Current Ironwood TPUs remain available with millions of units shipping throughout 2026. Google's roadmap includes TPU v8 transitioning to TSMC's 2nm process in 2027, indicating sustained investment in silicon independence from Nvidia's ecosystem.

Frequently Asked Questions

When will Google TPU 8 chips be available in the UAE?

General availability is expected later in 2026, with early access programmes beginning in Q3 2026. UAE enterprises can access them through Google Cloud's Dubai region for data residency compliance.

How much better is TPU 8i than the previous generation?

TPU 8i delivers 80% better inference performance per dollar compared to Ironwood TPUs, with 3x more memory and optimised architecture for low-latency applications like chatbots and real-time AI.

Can TPU 8 replace Nvidia chips for UAE AI projects?

For new deployments, yes — TPU 8 offers competitive performance with better cluster scaling. However, existing Nvidia-based projects may face integration costs when migrating due to different software ecosystems and optimisation requirements.

What makes Axion VMs better for UAE enterprises?

Axion VMs claim 30% better price-performance for agent workloads and double server density, reducing power consumption and data centre footprint. Both are critical advantages given the UAE's high cooling costs and space constraints.
